Software RAID has been relatively simple to use for a long time as it mostly just works. Things are less straightforward when using UEFI as you need an EFI partition that can’t be on a software RAID.

Well, you could put the EFI partition in a software RAID if you put the metadata at the end of the partition. That way the beginning of the partition would be the same as without RAID. The issue with this is if something external writes to the partition as you can’t be sure which drive has the correct state. That’s why we’re going to use another approach.

Instead of putting it on a RAID, we’ll install Ubuntu as usual, and then copy the EFI partition over to the second drive. Then we’ll make sure that either of the two hard drives can go away without affecting the ability to run or boot. We’re going to use the efibootmgr tool to make sure both drives are in the boot-list.

I’ll also add some info on how to handle drive replacements and updates affecting EFI. The steps also work if you are using Ubuntu 22.04.

So let’s get on with it.

Installing Ubuntu 24.04 with RAID

First, let’s install Ubuntu and get a step closer to our goal of RAID1. When you get to the storage configuration screen, select “Custom storage layout” and follow these steps:

- Reformat both drives if they’re not empty.

- Mark both drives as boot devices. Doing so will create an ESP (EFI system partition) on both drives.

- Add an unformatted GPT partition to both drives. They need to have the same size. We’re going to use those partitions for the RAID that contains the OS.

- Create a software RAID (md) by selecting the two partitions you just created for the OS.

- Congratulations, you now have a new RAID device. Let’s add at least one GPT partition to it.

- Optional: If you want the ability to swap, create a swap partition on the RAID device. Set the size to the same as your RAM, or half if you have 64 GB or more RAM.

- Create a partition for Ubuntu on the RAID device. You can use the remaining space if you want to. Format it as ext4 and mount it at /.

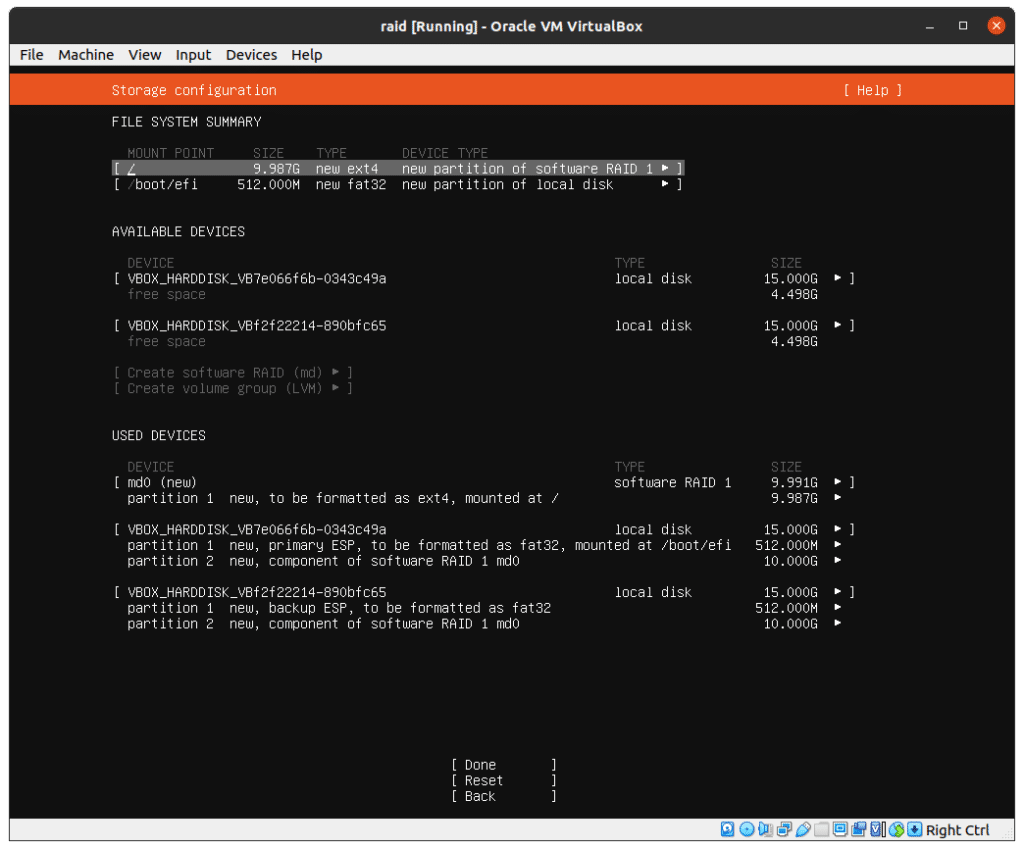

When you have followed the steps above, this is what it will look like:

Save the changes and continue along with the installation.

Make sure both drives are bootable

Congratulations, you now have a redundant setup! You can check the status of the RAID by running the following:

$ sudo mdadm --detail /dev/md0

If the RAID has completed syncing, you’ll be able to crash or remove one drive and run off of the remaining hard drive.
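If you only want to know whether the sync is still running, /proc/mdstat is quicker to read than the full --detail output. Here is a minimal sketch, assuming the kernel marks an ongoing sync with the words "resync" or "recovery" in that file. The function name raid_syncing is made up for this example.

```shell
# raid_syncing: read mdstat text on stdin and succeed (exit 0) if it shows
# a resync or recovery that is running, delayed or pending.
# Hypothetical helper name, not part of mdadm.
raid_syncing() {
    grep -Eq '(resync|recovery) *='
}

# Usage on a real system:
#   if raid_syncing < /proc/mdstat; then
#       echo "still syncing, keep both drives in"
#   else
#       echo "no sync in progress"
#   fi
```

It only looks for the progress line, so it says nothing about a degraded array that isn’t rebuilding. Use mdadm --detail for that.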

However, while this is fine, there is one potential lurking issue. If you remove one drive, you might be unable to boot the system. So let’s make sure the ESP is the same on both drives, and that the system will try to boot from either of the hard drives and not just one. Ubuntu’s installer should have taken care of this for you, but feel free to check.

First, show the partition UUIDs:

$ ls -la /dev/disk/by-partuuid/

total 0

drwxr-xr-x 2 root root 120 Oct 1 22:43 .

drwxr-xr-x 7 root root 140 Oct 1 22:43 ..

lrwxrwxrwx 1 root root 10 Oct 1 22:43 04d1fc28-4747-497b-9732-75f691a7ae7a -> ../../sdb2

lrwxrwxrwx 1 root root 10 Oct 1 22:43 0577b983-cf0a-4516-a3ab-92e19c3e9afe -> ../../sda1

lrwxrwxrwx 1 root root 10 Oct 1 22:43 97eecdcd-8ec3-4b8e-a6d9-1114d3baa75b -> ../../sda2

lrwxrwxrwx 1 root root 10 Oct 1 22:43 98d444f0-df7f-41d9-8461-95ca566bd3a7 -> ../../sdb1

Take note of the UUIDs belonging to the first partition on both drives. In this case, it’s the ones starting with 0577b983 (sda1) and 98d444f0 (sdb1).
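If the ls output is hard to scan, the same symlinks can be turned into a short device-to-UUID list. This is just a sketch; list_partuuids is a name I made up, and it assumes the directory contains one symlink per partition like in the listing above.

```shell
# list_partuuids [DIR]: print "<device> <partuuid>" for every symlink in DIR,
# sorted by device name. DIR defaults to /dev/disk/by-partuuid.
# Hypothetical helper name.
list_partuuids() {
    dir=${1:-/dev/disk/by-partuuid}
    for link in "$dir"/*; do
        [ -L "$link" ] || continue
        # The link target looks like ../../sda1, so basename gives sda1.
        printf '%s %s\n' "$(basename "$(readlink "$link")")" "$(basename "$link")"
    done | sort
}

# Usage:
#   list_partuuids | grep '1 '    # rough filter for first partitions like sda1, sdb1
```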

Next, check what drive you’re currently using:

$ mount | grep boot

/dev/sdb1 on /boot/efi type vfat (rw,relatime,fmask=0022,dmask=0022,codepage=437,iocharset=iso8859-1,shortname=mixed,errors=remount-ro)

As you can see, we’re currently using sdb1, so that’s working. Let’s copy it over to sda1:

$ sudo dd if=/dev/sdb1 of=/dev/sda1

Now we have a working ESP on both drives, so the next step is to make sure both ESPs exist in the boot-list:

$ efibootmgr -v

BootCurrent: 0005

Timeout: 0 seconds

BootOrder: 0001,0005,0006,0000,0002,0003,0004

Boot0000* UiApp FvVol(7cb8bdc9-f8eb-4f34-aaea-3ee4af6516a1)/FvFile(462caa21-7614-4503-836e-8ab6f4662331)

Boot0001* UEFI VBOX CD-ROM VB2-01700376 PciRoot(0x0)/Pci(0x1,0x1)/Ata(1,0,0)N…..YM….R,Y.

Boot0002* UEFI VBOX HARDDISK VBf2f22214-890bfc65 PciRoot(0x0)/Pci(0xd,0x0)/Sata(0,65535,0)N…..YM….R,Y.

Boot0003* UEFI VBOX HARDDISK VB7e066f6b-0343c49a PciRoot(0x0)/Pci(0xd,0x0)/Sata(1,65535,0)N…..YM….R,Y.

Boot0004* EFI Internal Shell FvVol(7cb8bdc9-f8eb-4f34-aaea-3ee4af6516a1)/FvFile(7c04a583-9e3e-4f1c-ad65-e05268d0b4d1)

Boot0005* ubuntu HD(1,GPT,98d444f0-df7f-41d9-8461-95ca566bd3a7,0x800,0x100000)/File(\EFI\ubuntu\shimx64.efi)

Boot0006* ubuntu HD(1,GPT,0577b983-cf0a-4516-a3ab-92e19c3e9afe,0x800,0x100000)/File(\EFI\ubuntu\shimx64.efi)

You should see two entries called ubuntu. Make sure the UUIDs are the same as the two you took note of earlier.
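To avoid comparing long UUIDs by eye, you can pull them out of the efibootmgr output. A minimal sketch, assuming the entries are labelled "ubuntu" and use the HD(…,GPT,<uuid>,…) path format shown above. The function name ubuntu_boot_partuuids is hypothetical.

```shell
# ubuntu_boot_partuuids: read `efibootmgr -v` output on stdin and print the
# partition UUID of every boot entry whose label starts with "ubuntu".
# Hypothetical helper name; it only parses text and changes nothing.
ubuntu_boot_partuuids() {
    sed -n 's/^Boot[0-9A-Fa-f]\{4\}[* ] ubuntu.*HD([0-9]*,GPT,\([0-9A-Fa-f-]*\),.*/\1/p'
}

# Usage:
#   efibootmgr -v | ubuntu_boot_partuuids
```

With the boot-list from this post, it should print the two UUIDs starting with 98d444f0 and 0577b983.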

If an entry is missing, you’ll need to add it.

Example of how to add an entry for the UUID starting with 0577b983 (sda1) if it’s missing:

$ sudo efibootmgr --create --disk /dev/sda --part 1 --label "ubuntu" --loader "\EFI\ubuntu\shimx64.efi"

You should now be able to remove either of the two drives and still boot the system.
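Before you pull a drive to test this, it may be worth confirming that the ESP copy made with dd really is identical to the original. A small sketch using cmp, assuming both ESPs have the same size (they do if the installer created them) and that nothing has written to the mounted ESP since the copy. The function name esp_copies_match is made up.

```shell
# esp_copies_match A B: succeed only if A and B are byte-for-byte identical.
# A and B can be partitions (reading them needs root) or plain image files.
# Hypothetical helper name; cmp is the standard tool doing the work.
esp_copies_match() {
    cmp -s "$1" "$2"
}

# Usage with the devices from this post:
#   sudo cmp -s /dev/sdb1 /dev/sda1 && echo "ESPs match" || echo "ESPs differ"
```

If they differ later on, for example after an update touched the mounted ESP, repeating the dd copy should bring them back in line.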

Adding a fresh drive after a failure

So, a drive has failed, and you’ve replaced it with a new one. How do you set it up?

First, find the new drive:

$ sudo fdisk -l

It’s probably one without any partitions. Make sure you’re using the right drive. In my case, it’s /dev/sdb, so I’ll want to back up the partition table from /dev/sda and write it to /dev/sdb.
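Since overwriting the wrong partition table is the expensive mistake here, a quick guard can help. This is a sketch under the assumption that the kernel exposes partitions as sub-directories such as /sys/block/sda/sda1. The function name has_partitions and its optional second argument are made up for the example.

```shell
# has_partitions DISK [SYSFS_BLOCK_DIR]: succeed if at least one partition
# directory exists for DISK (e.g. /dev/sdb) under /sys/block.
# Hypothetical helper; the second argument exists only to make it testable.
has_partitions() {
    name=$(basename "$1")
    sysdir=${2:-/sys/block}
    for part in "$sysdir/$name/$name"*; do
        [ -d "$part" ] && return 0
    done
    return 1
}

# Usage: refuse to continue if the drive you think is new already has partitions.
#   if has_partitions /dev/sdb; then
#       echo "/dev/sdb is not empty, double-check the drive" >&2
#   fi
```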

Change the source to the existing drive and dest to the new one:

$ source=/dev/sda

$ dest=/dev/sdb

Create a backup in case you mix it up:

$ sudo sgdisk --backup=backup-$(basename "$source").sgdisk "$source"

$ sudo sgdisk --backup=backup-$(basename "$dest").sgdisk "$dest"

Create a replica of the source partition table and then generate new UUIDs for the new drive:

$ sudo sgdisk --replicate="$dest" "$source"

$ sudo sgdisk -G "$dest"

Start syncing the RAID. Replace the X with the correct partition (it’s 2 for me):

$ sudo mdadm --manage /dev/md0 -a "${dest}X"

Now, copy over the ESP (replace X with the correct partition, it’s 1 for me):

$ sudo dd if="${source}X" of="${dest}X"

Then list the current drive UUIDs:

$ ls -la /dev/disk/by-partuuid/

Then show the boot-list:

$ efibootmgr -v

Take note of the BootOrder in case you want to change it.

If any of the ubuntu entries point to a UUID that no longer exists, delete it (replace XXXX with the ID from the boot-list):

$ sudo efibootmgr -B -b XXXX

If the UUID of partition 1 on either drive is missing from the boot-list, add it (replace the X with the drive that is missing):

$ sudo efibootmgr --create --disk /dev/sdX --part 1 --label "ubuntu" --loader "\EFI\ubuntu\shimx64.efi"

Verify that it’s correct:

$ efibootmgr -v

All good? Great! You now have a working RAID again.
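The comparison between the ESP UUIDs and the boot-list can also be scripted, which is handy as a recurring check after a drive swap. A minimal sketch, assuming you pass in the partition UUIDs of the two ESPs yourself. The function name missing_boot_entries is hypothetical.

```shell
# missing_boot_entries UUID...: read `efibootmgr -v` output on stdin and print
# every given partition UUID that no boot entry refers to.
# Hypothetical helper name; it only reads text and changes nothing.
missing_boot_entries() {
    _bootlist=$(cat)
    for uuid in "$@"; do
        # Boot entries reference a partition as HD(<n>,GPT,<uuid>,...).
        printf '%s\n' "$_bootlist" | grep -qiF "GPT,$uuid," || printf '%s\n' "$uuid"
    done
}

# Usage with the UUIDs from this post:
#   efibootmgr -v | missing_boot_entries \
#       0577b983-cf0a-4516-a3ab-92e19c3e9afe \
#       98d444f0-df7f-41d9-8461-95ca566bd3a7
```

No output means both ESPs are in the boot-list. Any UUID it prints needs an entry added with efibootmgr --create as described above.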

22 replies on “Ubuntu 24.04 with software RAID1 and UEFI”

Thank you for the great guide. I installed 20.04.2 per the guide. Just a couple of problems:

1) mount does not show anything under efi or boot so I cannot perform the partition copy.

2) efibootmgr says EFI variables are not supported on this system

Suggestions?

No problem! If you followed the installation steps, then Ubuntu should mount /boot/efi. Considering that you also got that efibootmgr error, can it be that you didn’t use UEFI?

Great article. I have been Googling the hecks out of this subject. To be clear, this is a desktop computer with multiple daily backup targets so it was basically “disposable”. I initially wanted to use my onboard RAID but got a rude surprise when I booted with the RAID1 config and was told by Ubuntu that I had to turn off the RST RAID configuration so after reading a few articles on software vs. hardware RAID I gave in and went with software RAID. Other articles always seemed to be missing something but this was the simplest and most logical. Thanks for taking the time to post it. I’m now going to blow it all up and try to redo it with RAID1 😉

Thanks! I prefer software RAID to the onboard RAID. Even if the onboard RAID does some offloading, most computers are so fast now that it doesn’t matter if it’s done in software. Good luck with the RAID. 🙂

How would you go about replacing two HDDs set up as software RAID 1 with two SSDs of the same size? Can you just ‘fail’ one of the HDDs, remove it, add the SSD, let it sync and then do the same for the other?

Thanks,

Steve

I’ve never tried it, but I don’t see why you shouldn’t be able to do that.

You can add the third drive to the RAID1 so you don’t lose redundancy, then after it is synced, remove the one you don’t need anymore, and repeat the same with the second one. You can migrate a running system to completely different storage this way without downtime.

I have one big problem: once I’ve added the drives as boot drives I can’t create an md RAID with the remaining partitions, OR if I create the md RAID1 I can’t add the drives as boot drives. It somewhat drives me mad (pardon the pun) ;-)…

It might be helpful to compare it to the picture in the post to see if there is any difference.

What happens when there’s an update to this type of install that needs to update the efi partition? Are there steps to fix that?

I could probably be more specific in the post about EFI updates. Updates might mess it up, but I haven’t experienced it myself on my servers. I do, however, check that the entries are still present with efibootmgr -v and that the IDs of the drives are still matching to make sure.

I might try some older unpatched versions of Ubuntu and see if that has any effect.

Thanks, great tips. I installed Ubuntu on a few HP servers, and Ubuntu doesn’t support the HP RAID controllers. I had to use software RAID and was able to clone the installation across servers quickly this way.

Will this work on a legacy bios system? If so what is different with the setup?

No, it won’t. This is specifically for those using UEFI.

Excellent guide! One additional item I’m looking to accomplish is to keep the ESP partitions in-sync if any updates are made to the active ESP. I’m looking to accomplish this by adding a hook into dpkg (used by apt) which will run a script to identify if a change has been made to the active ESP and then dd/rsync the changes to the other “inactive” ESPs. Do you have any suggestions/pointers on how to create such script?

I was considering making something that ensures it stays in sync, but I haven’t gotten around to it yet.

Great article! One part I’m confused about is the fact that my /etc/fstab file has a single reference to only one UUID, shouldn’t I add every possible one to this file?

/dev/disk/by-uuid/61A1-AF01 /boot/efi vfat defaults 0 1

Or do all the disks have the same UUID since we ran DD?

The EFI partitions on both drives have the same UUID. You should be able to confirm that by using “lsblk -f” and see which drive is currently mounted at /boot/efi.

You’ll only be using one of the EFI partitions at a time, but all the drives have one, so even if a drive goes down you still have an EFI partition for booting.

I’ve run into this and it certainly broke my boot; One of my drives went down for some reason and the other was fine (this is a RAID1 config)… the system booted, but ubuntu just got ‘stuck’ loading up, unable to mount the local file systems due to the entry in /etc/fstab explicitly pointing to the downed disk.

I think there is a much simpler solution, at least for ubuntu.

make sure EFI partitions are on the both disk and marked as EF00

one should be mounted as /boot/efi

the second one can be mounted to /boot/eficopy

run dpkg-reconfigure grub-efi-amd64

and mark both partitions as grub install devices.

that’s it.

You also have to add “nofail” to the mount options for the /boot/efi entry in /etc/fstab

Using this method, both drives have the same ID, so there is only one /boot/efi entry in fstab. If one is down, the other will be used. So no, you don’t have to add nofail, unless you for some reason don’t want an error if both drives are gone.